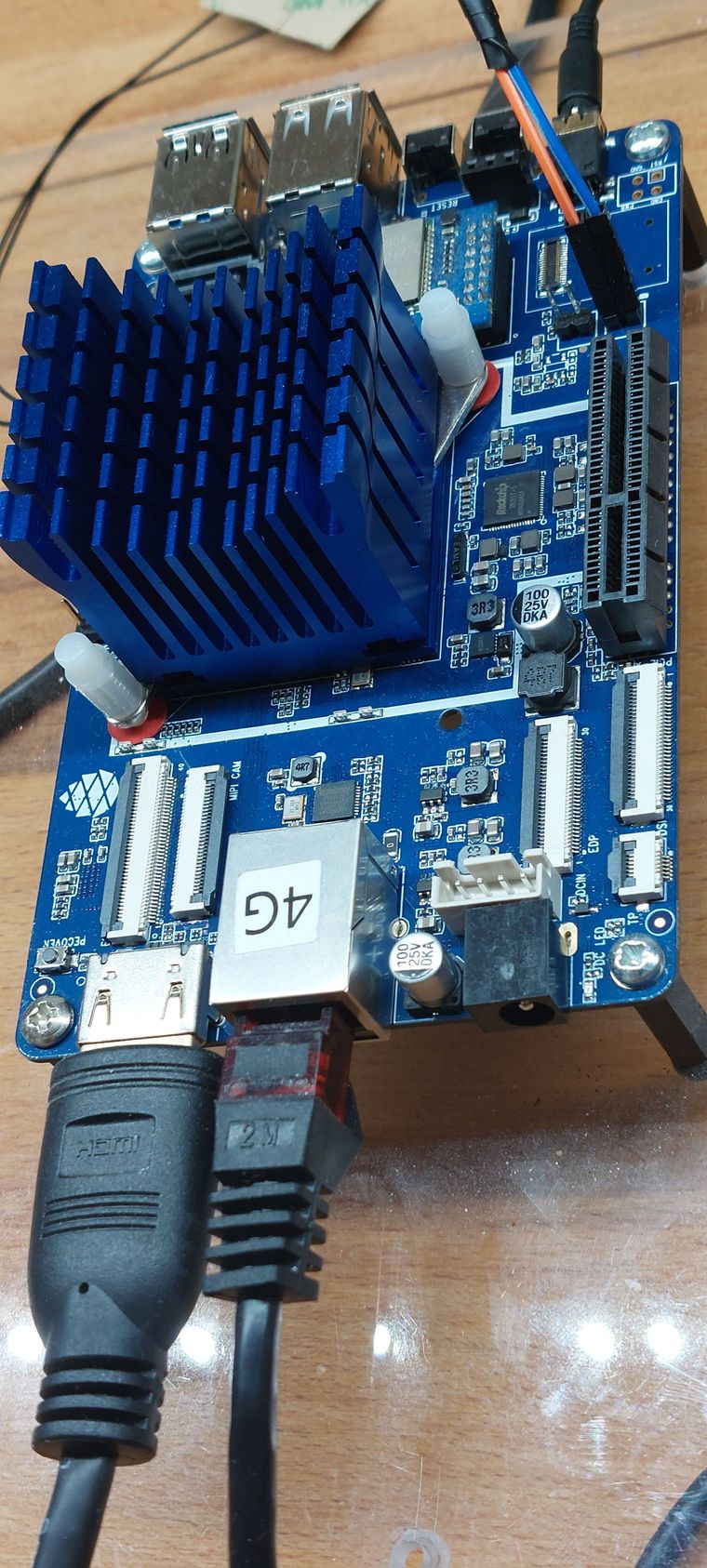

The result

Screenshot_20211128_090851.png

Peter's image is based on Debian 10 (Buster)

    root@quartz64:~# lsb_release -a
    No LSB modules are available.
    Distributor ID: Debian
    Description:    Debian GNU/Linux 10 (buster)
    Release:        10
    Codename:       buster

Kernel

    root@quartz64:~# uname -a
    Linux quartz64 5.16.0-rc2 #1 SMP PREEMPT Sat Nov 27 03:43:14 UTC 2021 aarch64 GNU/Linux

The good thing about Peter's image is the working PCIe port 🙂 The image is less suitable if you want to work with it long term, because it is not really set up for that. In that case you are better off with the Manjaro image, which is maintained and delivers all changes and patches.

I hope the PCIe patches will arrive in the Manjaro image soon, but for that they first have to land upstream, because Manjaro uses the upstream kernel (linux-rc). And as I read this morning, there is hope for a working USB3 port 🤗